Visualizing database using Minimal Modeling, pt. II

In the previous post, “How to visualize your database using Minimal Modeling”, we showed how to present parts of your database visually, represented as simple graphs.

I received some questions and comments, which I’m going to answer here. First, who is it for?

Minimal Modeling for data analysts

Minimal model is immediately useful for people who want to get some information from the database: data analysts, data scientists, software developers. Minimal model can tell you how to query the existing data.

Describing how the data gets into the database is out of scope for this series. This is a very important topic, of course: when something happens in the real world (customer places an order, somebody files a mortgage application, user clicks the “like” button) — what needs to be inserted/updated in the database tables to reflect this? We’ll talk about documenting the workflows some other time.

To try and answer that I’m going to present a simple data analysis case study. We’ll see how the minimal model helps with the data analysis. Then we’ll talk about how this scenario could be applied in practice. Then we’ll show actual visualization of the data model that we used.

Case study: “most interesting posts”

Imagine that I ask you to find the most interesting posts on Mokum for the past month. We define “most interesting” as:

Having at least 10 likes,

Or, having at least 10 comments left by at least 5 users,

Or, added to favorites by at least 10 users,

Or, having a comment with at least 10 likes.

Of course, some posts may be private, but we’ll apply the permissions check separately. For now let’s focus on data.

I point you to the minimal model of Mokum database (at the time of writing it covers most of the most important data): https://mokumplace.notion.site/Case-study-Mokum-f2001712e8424acc9ed9b3d97e482a53, but I want you to be as efficient as possible, so I tell you straight away about the pieces of data you would be interested in:

post__published_at attribute (link);

User likes Post link (link);

User writes Comment link (link);

Post has Comments link (link);

User favs Post link (link);

User likes Comment link (link);

User anchor (link);

Post anchor (link);

Comment anchor (link);

(One can’t help but notice that it would be nice if the word “link” did not have multiple meanings, but unfortunately that ship has already sailed.)

So, now you know about one attribute, five links and three anchors that I think you would need to work on the task.

One of the most useful pieces of information that you get from the links above is in the “Query” column. It shows the SQL query fragment that you can to get the actual data. For example, for “User favs Post” we have the following query:

SELECT user_id, post_id

FROM favs

You can use this query for data exploration: you can add “LIMIT 100”, execute it against the development database and confirm that the resulting dataset actually looks like what you need. You can also reuse this query as part of the larger query, the one that you’re busy writing.

So, after you apply your mad SQL skills and creatively combine the nine subqueries listed above, we have the result we’ve been looking for. What’s more, you saved a lot of time because you did not have to search through the raw database schema (https://mokumplace.notion.site/Mokum-SQL-schema-71b2ac0530cb4a3e9202653ec756a432).

Also, now you know a bit more about the Mokum database.

Real-world considerations

Of course, the scenario described above is suspiciously happy. Let’s see what we could encounter in practice. (We return to the visualization in the following section, skip this one if you want.)

1/ I could be busy so I only send you a link to the minimal model, without telling you about specific anchors/links/attributes. You will have to read it through, looking for the pieces of data that look relevant. You can also use full-text search looking for words like “like”, “comment”, “user”, etc.

I just did this experiment, using Notion search, and it basically works, it shows pretty relevant content. (Of course, I’m heavily biased because I know a lot about the domain and the model and schema.) One thing that I see is that you can’t find anything if you search for “favorite” (you need to search for “fav”); I’m going to fix this problem by next week.

2/ The SQL queries provided in the documentation may not be the optimal way to query some data. For example, it may be that we have cached columns such as “post_counters.likes_count”. Some data may even not be accessible as SQL (maybe we need to use some sort of internal API for that).

This, however, could also be described as part of the minimal model. It’s perfectly normal to have the same data in multiple representations, for example:

The primary relational database (“

likes” table) — this is the source of truth;A table in the data lake, copied from the primary DB, maybe converted into some kind of star schema;

Cached counter column, optimized for some common needs, such as ours;

Heavily-optimized JSON representation that is used to render posts when serving the actual website pages (coincidentally, this is how it works in Mokum);

Non-SQL representation, e.g. available from an internal microservice as a JSON API;

Etc, etc.

Anchors, attributes and links are used to document primary data (the source of truth). Other representations are documented separately. For example, see the “Mokum secondary data” page, especially rows marked as “Pre-aggregated value”.

3/ The minimal model could be incomplete (and certainly would be incomplete, unless you put in some non-trivial effort). Of course, having 100% coverage would be nice, but you can’t really expect that. Normally the minimal model would be accumulated over the weeks, months and years, by different people, attribute by attribute.

So, what if only half of the data is described in the minimal model? Well, you fall back to researching the table schema. You use Ctrl-F a lot, you ask around, you explore the data: basically you do the usual work that everyone does. But now you have a place where you can document your findings. You just have to add a new row to the minimal model, and this row is very well structured.

For example, to document a link you have to fill in nine or so columns:

Sentence (“User likes Post”);

Anchor 1 and 2;

Query;

Cardinality (1:N, N:M);

Audit columns (such as timestamps, “who changed it”, etc.);

Description, Remarks, Attributes (more on that later);

That’s it. You’ve already done all the work needed to use this data, just write it down here, and the next person will not have to spend this time again.

Visualizing the “most interesting posts” data model

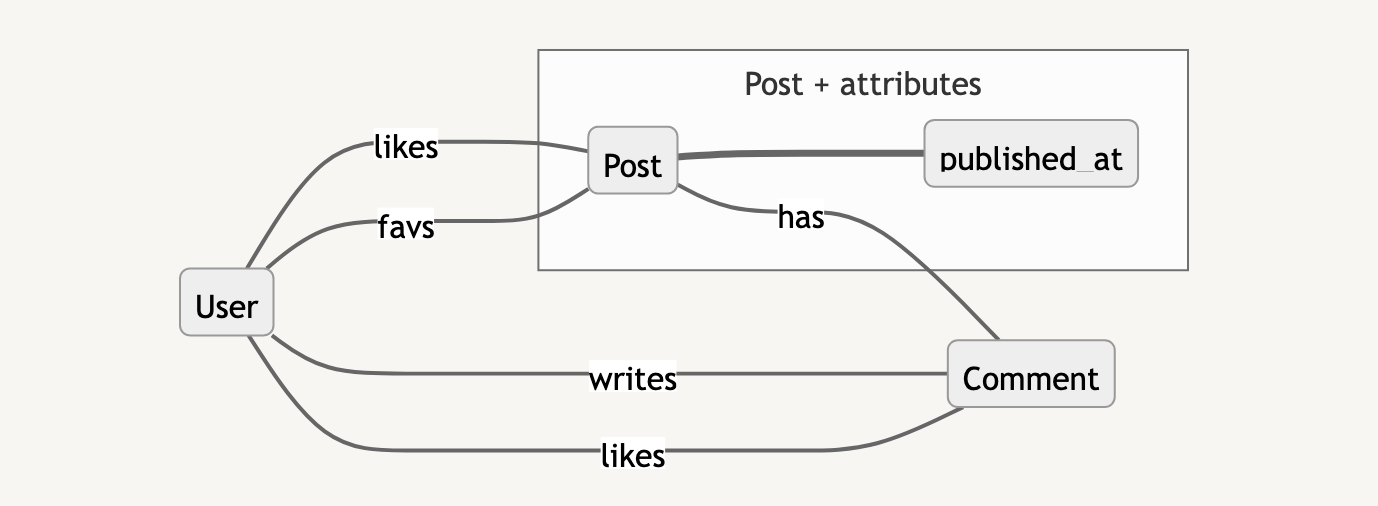

We may now want to draw a small picture that shows all the data needed to find the most interesting posts. Allow me to present this sketch:

It’s clear that visual representations like this are very low-resolution, information-wise. You must refer to the minimal model, presented in tabular and textual format, to get the detailed information. You also have to refer to the textual description of the task at hand, where you find details such as “what is the threshold of the number of likes”.

Visualizations like this, however, can help assess the scope. Remember that above we listed 1 attribute, 5 links and 3 anchors as relevant to the task at hand. You can confirm that they all are in this picture: five labeled arrows, one attribute rectangle (beginning with the lowercase letter), three anchor rectangles (beginning with the uppercase letter).

A lot could be improved in the pictures, certainly, from the point of view of graphics design. However, it is important to first understand:

what you’re trying to achieve with the images;

what information do they carry;

what information is deferred to the main document and to the data catalog.

This is especially important because those pictures are created in real world conditions. Preparation process needs to be scalable (basically, most people should be able to create them). Also, it should be clear how to update the images when the corresponding dataset changes.

To be continued…

Next week we’ll discuss:

how the traditional, table-based visualizations look in comparison with this approach;

what tools can be used to create visualizations as presented above.